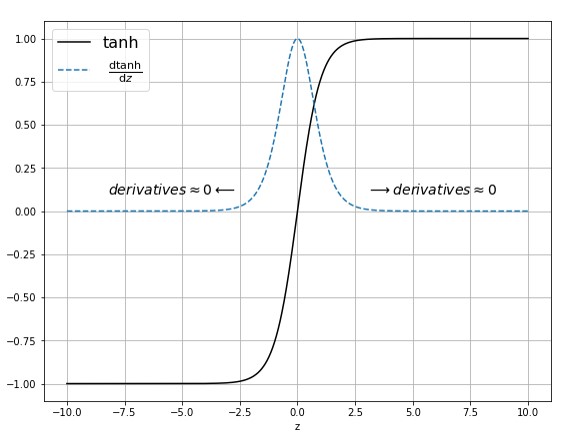

From the plots of the sigmoid and tanh activation functions, we can see that for large positive (or large negative) values of z (shown on the x-axis, where z = wx + b), the derivative is zero or very close to zero. So for large-magnitude values of z, we will have vanishing gradients…
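To make the saturation concrete, here is a minimal sketch in plain Python (standard library only, not PyTorch) that evaluates the two derivatives at a few values of z. The helper names `sigmoid_grad` and `tanh_grad` and the sample values of z are illustrative assumptions, not code from the post.

```python
import math

def sigmoid(z):
    """Logistic sigmoid: 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    """Derivative of the sigmoid: s(z) * (1 - s(z))."""
    s = sigmoid(z)
    return s * (1.0 - s)

def tanh_grad(z):
    """Derivative of tanh: 1 - tanh(z)^2."""
    return 1.0 - math.tanh(z) ** 2

# Illustrative sample points: both derivatives peak at z = 0
# and shrink toward zero as |z| grows.
for z in (-10.0, -2.0, 0.0, 2.0, 10.0):
    print(f"z={z:+.1f}  sigmoid'={sigmoid_grad(z):.6f}  tanh'={tanh_grad(z):.6f}")
```

The sigmoid derivative never exceeds 0.25 and the tanh derivative never exceeds 1, so multiplying many such factors during backpropagation tends to shrink the gradient, and more so once the units saturate.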

Month: May 2019

Deep Learning with Pytorch -Sequence Modeling – Time Series Prediction – RNNs – 3.1

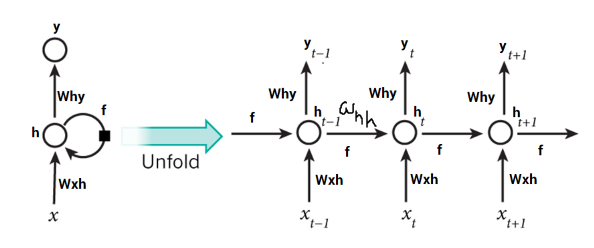

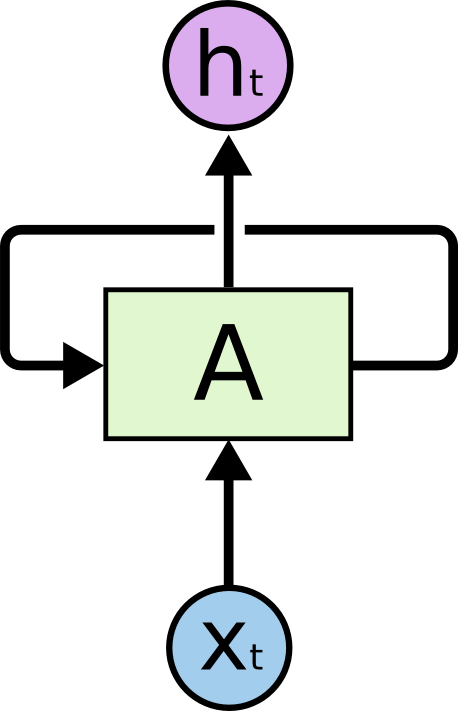

In the previous post of this series, we learned the intuition behind RNNs and tried to understand how we can use RNNs for sequential data such as time series. In this post we will see a hands-on implementation of RNNs in PyTorch. In the case of neural networks, we feed a vector as predictors…
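As a rough illustration of the recurrence an RNN applies to a time series, the sketch below steps a single scalar hidden state through a short sequence in plain Python. It is not the PyTorch implementation from the post; the function name `rnn_forward`, the weights `w_x`, `w_h`, `b`, and the sample series are all hypothetical values chosen for illustration.

```python
import math

def rnn_forward(xs, w_x, w_h, b, h0=0.0):
    """Run a scalar RNN over a sequence.

    At each step: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b).
    Returns the list of hidden states, one per input.
    """
    h = h0
    hidden_states = []
    for x in xs:
        # The new state depends on the current input and the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        hidden_states.append(h)
    return hidden_states

# Hypothetical toy series and weights, for illustration only.
series = [0.1, 0.2, 0.3, 0.4]
states = rnn_forward(series, w_x=0.5, w_h=0.8, b=0.0)
print(states)
```

The point of the sketch is that the same weights are reused at every time step and the hidden state carries information forward, which is what lets the network model sequential data.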

Deep Learning with Pytorch -Sequence Modeling – Getting Started – RNN – 3.0

In the CNN series, we learned about the limitations of MLPs and how they can be addressed with CNNs. Here we are getting started with another type of neural network: the RNN (Recurrent Neural Network). Some of the tasks that we can achieve with RNNs are given below – 1. Time Series Prediction (Stock…

Deep Learning with Pytorch -CNN – Transfer Learning – 2.2

Transfer learning is the process of applying knowledge gained from one task to another, newly assigned task. A simple example: you carry over the skills you learned riding a bicycle when you learn to ride a motorbike. Referring to the notes from the CS231n course – In practice, very…